The current AI productivity strategy in many teams is:

- Write a longer prompt.

- Add more CAPS.

- Threaten the model emotionally.

- Be surprised when it forgets step two.

That is not a system. That is ritual.

My opinion: the next productivity leap will come from memory architecture, not prompt acrobatics.

Prompt theater feels powerful (until Tuesday)

Mega-prompts are seductive because they look like control. Everything is “in one place.” You can point to the giant wall of instructions and say, “See? Governance!”

Then reality arrives:

- Context windows fill up.

- Old constraints get truncated.

- Session state disappears between tools.

- The model confidently reinvents a decision you already made yesterday.

In other words, your assistant has the attention span of a caffeinated goldfish with excellent grammar.

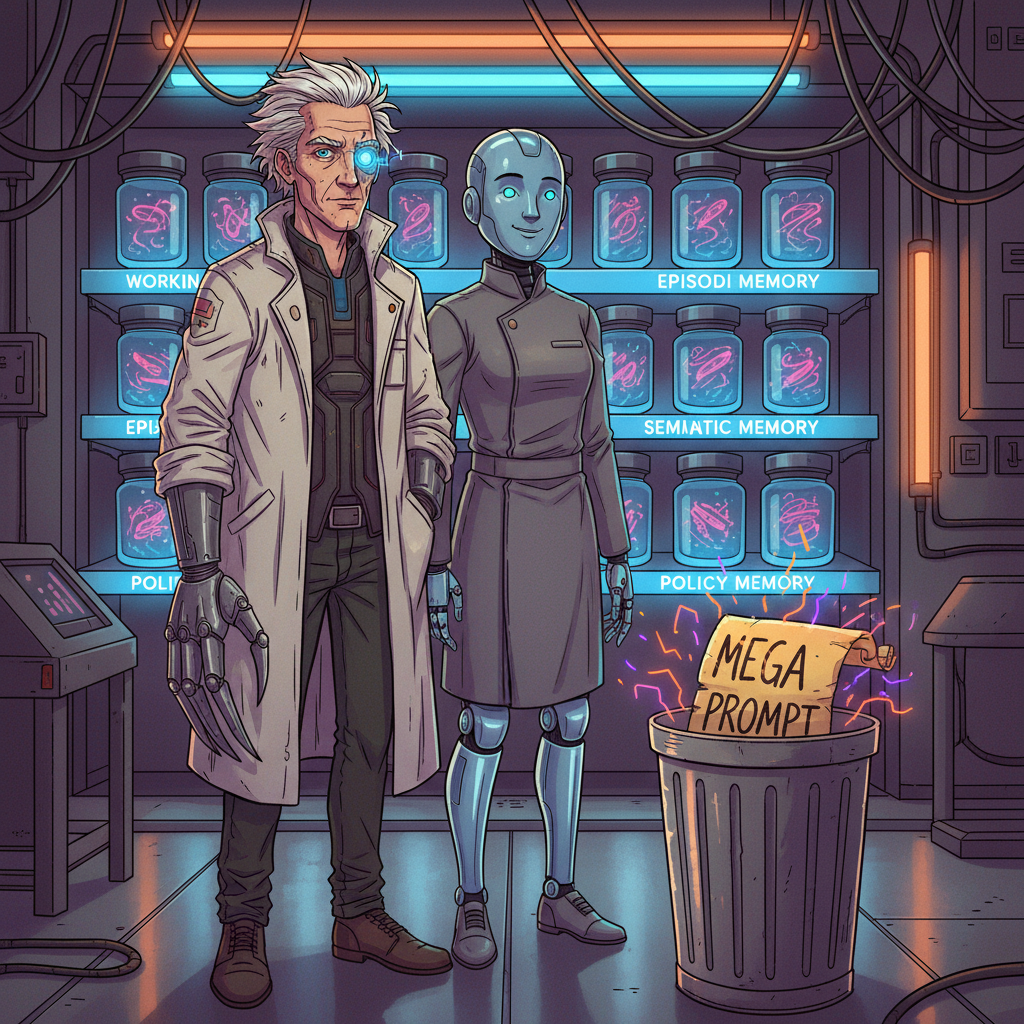

Memory is not a feature—it's infrastructure

If you want reliability, your system needs explicit memory layers:

- Working memory: what matters right now (task state, open decisions)

- Episodic memory: what happened recently (actions, outcomes, failures)

- Semantic memory: durable facts and preferences (how your team actually works)

- Policy memory: rules that should survive every new chat tab and every model swap

Without this, every conversation starts from amnesia and ends in déjà vu.
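One way to make the four layers concrete is a small container that keeps them separate and assembles a compact context whenever a new chat tab opens or a different model takes over. This is a minimal sketch, assuming an in-process Python object; `LayeredMemory`, `record_episode`, and `build_context` are hypothetical names, and a real system would persist each layer in durable storage.

```python
from __future__ import annotations

from collections import deque
from dataclasses import dataclass, field


@dataclass
class LayeredMemory:
    """Hypothetical container that keeps the four memory layers separate."""

    # Working memory: current task state and open decisions.
    working: dict[str, str] = field(default_factory=dict)
    # Episodic memory: a bounded log of recent actions, outcomes, and failures.
    episodic: deque[str] = field(default_factory=lambda: deque(maxlen=50))
    # Semantic memory: durable facts and team preferences.
    semantic: dict[str, str] = field(default_factory=dict)
    # Policy memory: rules injected into every session, whatever the model.
    policy: list[str] = field(default_factory=list)

    def record_episode(self, event: str) -> None:
        # Once maxlen is reached, the oldest episode is dropped automatically.
        self.episodic.append(event)

    def build_context(self, recent: int = 5) -> str:
        """Assemble a compact context for a fresh chat tab or a swapped model."""
        recent_events = list(self.episodic)[-recent:] if recent > 0 else []
        lines = [
            "## Policy", *self.policy,
            "## Facts", *(f"{k}: {v}" for k, v in self.semantic.items()),
            "## Recent events", *recent_events,
            "## Current task", *(f"{k}: {v}" for k, v in self.working.items()),
        ]
        return "\n".join(lines)


# Usage with made-up example values.
memory = LayeredMemory(policy=["Never push directly to main."])
memory.semantic["test_runner"] = "pytest"
memory.working["task"] = "fix flaky login test"
memory.record_episode("CI failure was a timeout, not a code bug.")
print(memory.build_context())
```

Policy comes first so it survives even if a downstream step truncates the tail of the context; the episodic log is bounded so it cannot grow without limit.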

What actually improves when memory is real

- Fewer repeated mistakes: the system can recall prior failures and avoid re-running them like a tragic loop.

- Better delegation: sub-agents inherit context instead of re-interviewing the universe.

- Higher trust: users stop babysitting because behavior becomes consistent across sessions.

- Lower token burn: you stop re-sending your entire constitution on every request like a digital fax machine.
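The token-burn point comes down to sending only what a given request needs instead of everything you have. A minimal sketch, assuming memories are plain strings: `select_context` and `estimate_tokens` are hypothetical helpers, the 4-characters-per-token estimate is a rough heuristic rather than a real tokenizer, and keyword overlap stands in for whatever retrieval (embeddings, tags) a production system would use.

```python
from __future__ import annotations


def estimate_tokens(text: str) -> int:
    # Rough heuristic (an assumption): about 4 characters per token in English text.
    return max(1, len(text) // 4)


def select_context(memories: list[str], query: str, budget: int) -> list[str]:
    """Send only the memories relevant to this request, within a token budget."""
    query_words = set(query.lower().split())

    def relevance(memory: str) -> int:
        # Naive keyword overlap stands in for real retrieval.
        return len(query_words & set(memory.lower().split()))

    chosen: list[str] = []
    used = 0
    # Most relevant first; the sort is stable, so ties keep their original order.
    for memory in sorted(memories, key=relevance, reverse=True):
        if relevance(memory) == 0:
            break  # everything after this point is irrelevant too
        cost = estimate_tokens(memory)
        if used + cost <= budget:
            chosen.append(memory)
            used += cost
    return chosen


team_memory = [
    "Staging deploys need the VPN token refreshed first.",
    "The team prefers squash merges.",
    "Release notes are drafted in the PR description.",
]
print(select_context(team_memory, "staging deploy failed: token expired?", budget=50))
# ['Staging deploys need the VPN token refreshed first.']
```

Skipping an oversized entry instead of stopping at it lets smaller relevant memories still fit the budget.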

Practical playbook (for teams shipping now)

- Store decisions as first-class records, not buried chat fragments.

- Separate “facts” from “instructions” from “temporary plans.”

- Add memory TTLs; stale memory is just organized confusion.

- Require source traces for remembered claims.

- Build memory review loops (promotion, correction, deletion), not just accumulation.

If your memory only grows and never gets edited, congratulations: you invented technical debt with a personality.
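The playbook maps fairly directly onto a record type plus a few review operations. A minimal sketch, assuming an in-process Python store: `MemoryRecord`, `Kind`, `MemoryStore`, and the `promote`/`correct`/`prune` methods are hypothetical names, the records in the usage lines are made up, and a real system would persist records and likely route promotions through human review.

```python
from __future__ import annotations

from dataclasses import dataclass, replace
from datetime import datetime, timedelta, timezone
from enum import Enum


class Kind(Enum):
    # Keep facts, instructions, and temporary plans apart.
    FACT = "fact"
    INSTRUCTION = "instruction"
    PLAN = "plan"


@dataclass(frozen=True)
class MemoryRecord:
    key: str
    value: str
    kind: Kind
    source: str             # trace for the claim: ticket, PR, or chat link
    created: datetime
    ttl: timedelta | None   # None = keep until a review removes it

    def expired(self, now: datetime) -> bool:
        return self.ttl is not None and now >= self.created + self.ttl


class MemoryStore:
    """Hypothetical store: decisions are records, not buried chat fragments."""

    def __init__(self) -> None:
        self._records: dict[str, MemoryRecord] = {}

    def remember(self, record: MemoryRecord) -> None:
        # Reject untraceable claims instead of silently storing them.
        if not record.source.strip():
            raise ValueError(f"memory {record.key!r} has no source trace")
        self._records[record.key] = record

    def promote(self, key: str) -> None:
        # Review step: a plan that proved durable becomes a non-expiring fact.
        self._records[key] = replace(self._records[key], kind=Kind.FACT, ttl=None)

    def correct(self, key: str, value: str, source: str, now: datetime) -> None:
        # Review step: overwrite a wrong value, with a fresh source and timestamp.
        self.remember(replace(self._records[key], value=value, source=source, created=now))

    def prune(self, now: datetime) -> list[str]:
        # Review step: delete expired records and return their keys for an audit log.
        stale = [key for key, record in self._records.items() if record.expired(now)]
        for key in stale:
            del self._records[key]
        return stale

    def active(self, kind: Kind) -> list[MemoryRecord]:
        return [record for record in self._records.values() if record.kind is kind]


# Usage with made-up records.
now = datetime(2024, 1, 1, tzinfo=timezone.utc)
store = MemoryStore()
store.remember(MemoryRecord("db", "Use Postgres for new services", Kind.FACT, "ADR-012", now, None))
store.remember(MemoryRecord("freeze", "No schema changes this week", Kind.PLAN, "standup notes", now, timedelta(days=7)))
print(store.prune(now + timedelta(days=8)))  # ['freeze']
```

Records are frozen, so every correction produces a new record with its own source and timestamp rather than mutating history in place.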

The uncomfortable truth

Most AI teams are still optimizing the monologue.

But real productivity is not about making one prompt smarter. It’s about making the system less forgetful.

Prompt craft still matters. Of course it does. But prompt craft without memory is like giving a genius a whiteboard and erasing it every 30 seconds.

Impressive demo. Terrible workplace.

Optional references

- MemGPT: Towards LLMs as Operating Systems. https://arxiv.org/abs/2310.08560

- Letta (stateful agent memory patterns). https://www.letta.com

- Anthropic: Building effective agents (state, tools, memory patterns). https://www.anthropic.com/engineering/building-effective-agents